How to safely run hundreds of AI and ML models that drive better customer outcomes and growth.

As organisations strive to be leaner, fitter and more data-driven in a post-pandemic world, artificial intelligence (AI) and machine learning (ML) are increasing in priority for enterprises, governments and not-for-profits alike.

According to a recent poll, 43% of enterprises claim that AI and ML initiatives matter “more than we thought”, with half looking to up their AI and ML spending this year – 20% of them “significantly” so.

While nine out of ten top businesses now deploy AI in some form, the same proportion also admit to struggling with the technology, which in turn jeopardises their ability to scale up AI usage.

This is no small matter, with 75% of C-level executives claiming they will be out of business in five years if they do not deploy the technology more widely.

When done well, deploying AI at scale can boost efficiency and effectiveness, while enabling new products, services and business models. Done poorly, it can fail to deliver any return at all, or in the extreme, lead to reputational damage.

Deploying AI at scale involves more than addressing the issues of data flow through enhanced technical capability. In our view it also requires:

- the ability to identify where in an organisation AI can be used to improve outcomes or lower costs;

- the ability to build solutions that provide that value; and

- the ability to govern the use of AI to make sure that it is operating effectively.

How can organisations achieve this standard and successfully navigate their AI scale-up journey? Here we walk through the key components, starting with data flow, then layering in the drivers of value creation and the constraints that keep AI safe.

The Data Viewpoint

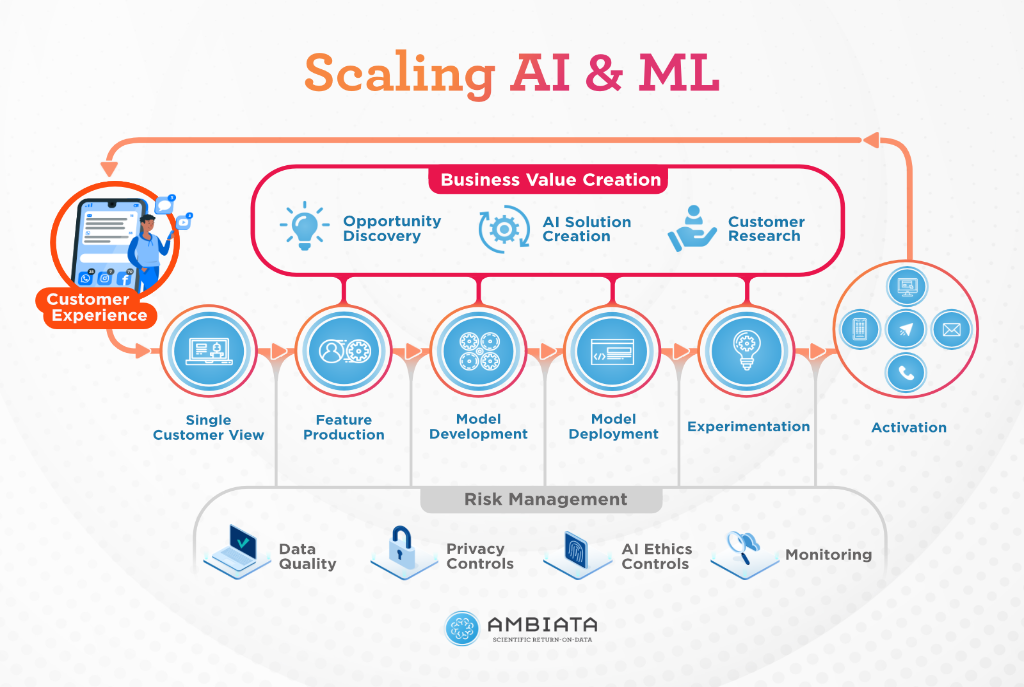

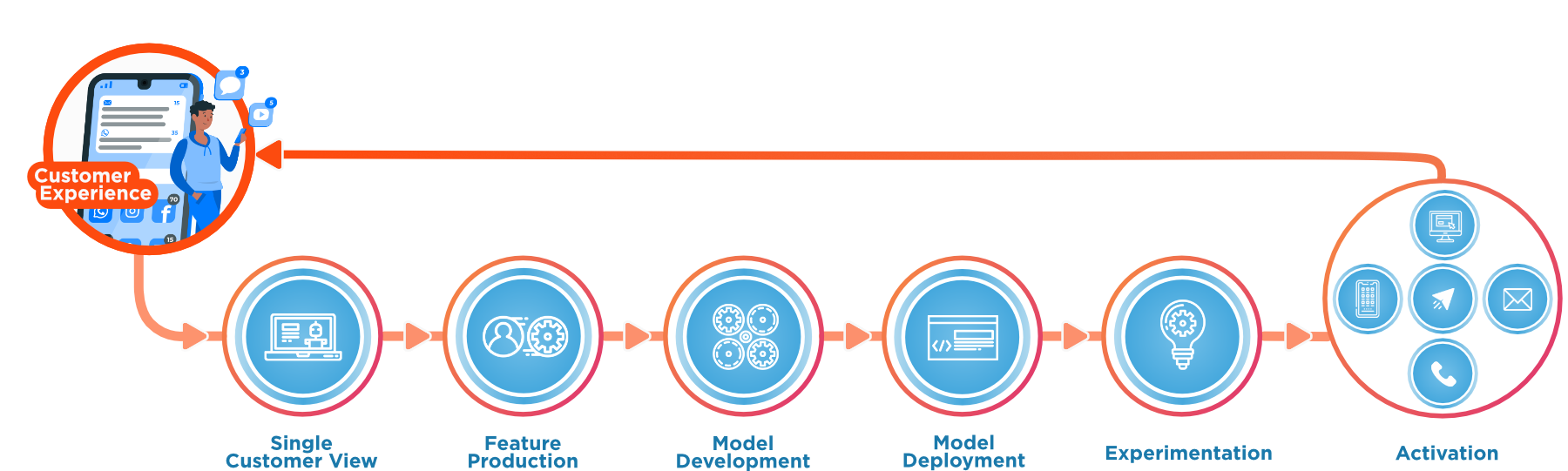

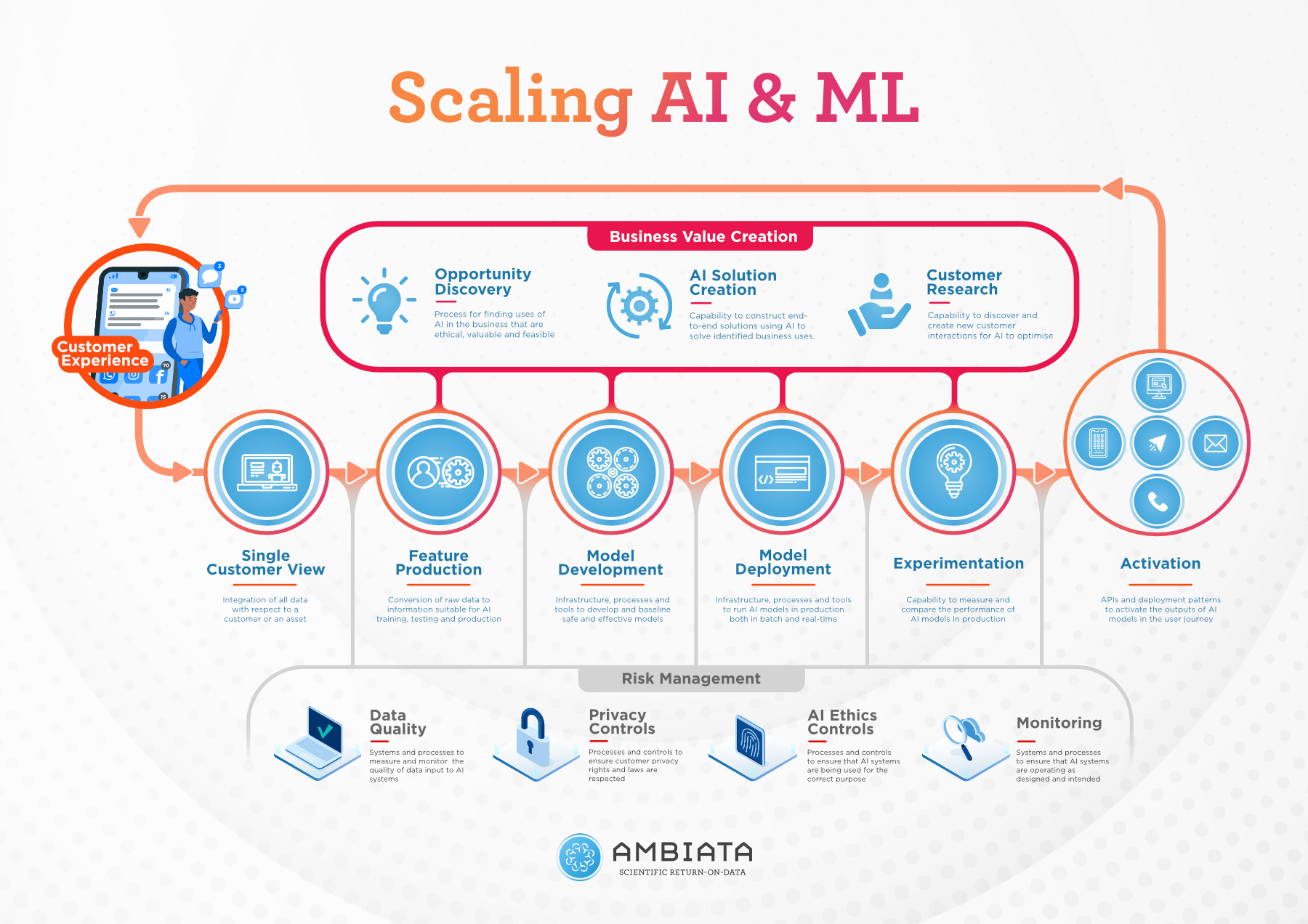

When starting out with AI and ML, organisations often begin with small-scale trials. In these trials, data is extracted from a source system, a model is built, and insights are derived and presented or, more rarely, a production service is deployed. To move beyond these beginnings, the process needs to be industrialised, which requires the components illustrated for the customer experience in the accompanying figure.

Such an industrialised data loop is a self-reinforcing system – more data leads to better experience, which leads to more customer engagement, and ultimately more data. Organisations that are truly data-driven use this loop in most of their customer touch points and processes, often running hundreds of AI & ML models in production at once.

To do this reliably, six key capabilities are needed:

- Single Customer View - a single, accessible location where all of the relevant customer data is located. This step ensures all data is available for use as inputs to models.

- Feature Production - particular data needs to be readily converted into a form suitable for AI training, testing and production. These ‘features’ should be reliable, reproducible and maintainable, so they can serve multiple AI use cases.

- Model Development - the algorithms developed by data scientists and ML engineers must be built on appropriate infrastructure, processes and tools. These tools should assist with model versioning and hyperparameter tuning.

- Model Deployment - once developed, the right infrastructure, processes and tools are needed to run an AI model in production.

- Experimentation - the performance of AI models is systematically measured and compared with other models, via a ‘controlled experiment’. These experiments are no less rigorous than those carried out in the scientific field.

- Activation - AI models may be activated through different channels – for instance web apps, mobile apps and the call centre – and patterns for their use in each of these channels must be established.
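To make the Experimentation capability more concrete, here is a minimal sketch of how the results of a controlled experiment between two models might be compared. It assumes each customer is randomly assigned to one of two models and that success is a simple yes/no conversion; the function name `two_proportion_z_test` and the example counts are illustrative assumptions, and the two-proportion z-test is only one of several reasonable tests.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare conversion rates of model A and model B.

    Returns (z, p_value) for the null hypothesis that both models
    convert at the same rate, using a pooled two-proportion z-test.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis of no difference.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("No variation in outcomes; the test is undefined.")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 100 of 1,000 customers convert under model A,
# 150 of 1,000 under model B.
z, p_value = two_proportion_z_test(100, 1000, 150, 1000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

With these hypothetical counts the z-statistic is roughly 3.4 and the p-value is well below 0.01, so the difference would be unlikely to be due to chance alone. In practice, sample sizes and stopping rules should be fixed before the experiment starts.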

Deriving value from AI & ML

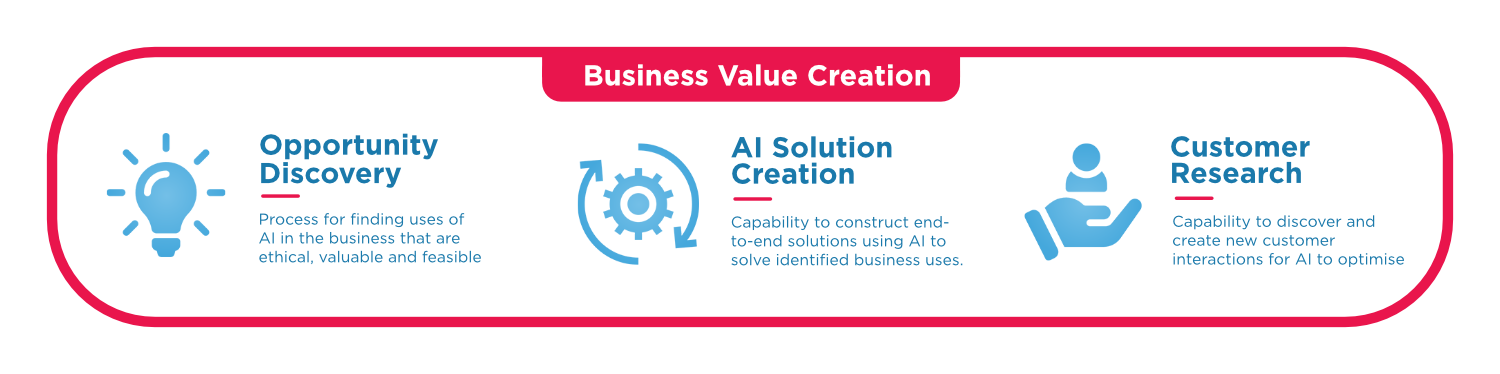

Regardless of their technical prowess, data science teams, and the data infrastructure they rely on, need to be connected to the people who understand how the business needs to improve if AI is to truly deliver value. At scale, this means three things: appropriate ML and AI use cases need to be brought forward by the business (implying that it trusts the potential outcomes); there must be a mechanism for trialling and productionising the right use cases; and creativity around customer and process outcomes needs to be injected from the business into those use cases.

Opportunity Discovery

Firstly, organisations need to identify the key areas where data and AI can add value. This requires a structured mechanism to discover the opportunities for AI and ML in the business that are ethical, valuable and feasible. Initially, this could be a top-down approach, in which you become familiar with the common use cases that apply to your industry. Alternatively, it could be bottom-up, involving primary research with employees and customers to understand the key problems they are facing. At maturity, the business will trust and understand the value that AI and ML can provide and bring forward ideas for evaluation.

AI Solution Creation

Once a problem has been selected, the next step is to create an AI-powered solution. Many initial ideas will be infeasible - either technically or economically - so it may take some iterations to arrive at a solution worth pursuing. Establishing a team that builds AI solutions from the business backlog will help build the capability that can quickly evaluate feasibility and build MVPs to estimate value.

Customer Research

For customer-facing use cases, applying AI may also require customer research, such as behavioural psychology or user experience studies. This research can help in the design of the customer interventions that the AI model will ultimately generate, increasing the value of AI output. In general, customers will respond differently to variations in interventions, messages, choice structures, channels and timing.

For more info on building a plan for activating AI in your organisation, refer to our previous blogs, AI Strategy to Implementation and Removing the FUD from AI.

Managing risks in the use of AI & ML at scale

Things can go wrong with AI and ML, and problems often begin at the very first step: the evaluation of the ethics of a use case. When a use case doesn’t match societal expectations, or produces unexpected results such as disproportionate impacts on minorities, severe reputational damage can result.

When many AI algorithms are in production and interacting with your customers, the controls in place must ensure that those algorithms are driving appropriate customer outcomes. There needs to be a broad and comprehensive approach to risk management - here we break this down into guardrails in four key areas, but much more could be said about each:

Data Quality

Ensuring the data you collect and feed into AI models is of a suitable quality to drive reliable feature production. This means controlling for biases, ensuring your data pool is of a sufficient size and is representative of the group your AI model will be targeting, and monitoring that it stays that way. At scale, these processes should be automated, and their monitoring formalised.
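As an illustration of what an automated representativeness check could look like, the sketch below compares the share of each group in a data sample with the share expected in the target population, and reports any group that drifts beyond a tolerance. The function name `representation_gaps`, the group labels and the 5% tolerance are all assumptions made for the example, not a prescribed standard.

```python
from collections import Counter


def representation_gaps(sample_groups, reference_shares, tolerance=0.05):
    """Find groups whose share of the sample differs from the reference.

    sample_groups: one group label per record in the data sample.
    reference_shares: expected share of each group in the target population.
    Returns {group: (observed_share, expected_share)} for groups whose
    absolute difference exceeds the tolerance.
    """
    total = len(sample_groups)
    if total == 0:
        raise ValueError("The sample is empty.")
    counts = Counter(sample_groups)
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total
        if abs(observed - expected) > tolerance:
            gaps[group] = (observed, expected)
    return gaps


# Hypothetical sample: 80 records from group "a" and 20 from group "b",
# where the target population is expected to be an even split.
sample = ["a"] * 80 + ["b"] * 20
print(representation_gaps(sample, {"a": 0.5, "b": 0.5}))
```

A check like this could run on a schedule against each key data source, with any non-empty result raised to the data owner.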

Privacy Controls

Ensuring that, at all stages of the AI solution development and production process, access to the data being used is appropriately managed and protected. If data was collected historically, this also means understanding and managing what purpose the data was collected for and what consents the user has given for its use. At scale, there should be formal data governance processes and automated systems controlling appropriate access to data.

AI Ethics Controls

Just because AI ‘can be’ used in a particular use case, doesn’t mean that it ‘should be’ used. In Australia, the eight AI ethics principles published by the Australian Government should be used when designing, developing, integrating or using AI systems. At scale, there should be a formal AI ethics framework and regular review of all algorithms in production.

Monitoring

Monitoring involves systems and processes to ensure that models are operating as designed and intended. This means monitoring both the functional performance of algorithms and the outcomes of their use. For instance, an algorithm may display biases in production that are not apparent in its training; this must be measured, and an alert raised if it occurs.
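One simple way to measure an outcome bias in production is to compare the rate of positive decisions across customer groups. The sketch below is a minimal example under stated assumptions: each logged record is a `(group, decision)` pair, the names `positive_rate_by_group` and `parity_alert` are hypothetical, and the 0.1 maximum gap is an arbitrary illustrative threshold. The gap in positive rates is only one of many possible fairness measures, and the right one depends on the use case.

```python
def positive_rate_by_group(records):
    """Return the share of positive decisions for each group.

    records: iterable of (group, decision) pairs, decision being True/False.
    """
    totals = {}
    positives = {}
    for group, decision in records:
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if decision else 0)
    return {group: positives[group] / totals[group] for group in totals}


def parity_alert(records, max_gap=0.1):
    """Return (alert, gap, rates), where alert is True if the gap between
    the highest and lowest group positive rates exceeds max_gap."""
    rates = positive_rate_by_group(records)
    if not rates:
        raise ValueError("No records to evaluate.")
    gap = max(rates.values()) - min(rates.values())
    return gap > max_gap, gap, rates


# Hypothetical production log: group "x" receives a positive decision
# 8 times out of 10, group "y" 5 times out of 10.
log = (
    [("x", True)] * 8 + [("x", False)] * 2
    + [("y", True)] * 5 + [("y", False)] * 5
)
alert, gap, rates = parity_alert(log)
print(alert, round(gap, 2), rates)
```

Here the gap between the two groups is about 0.3, which exceeds the illustrative threshold, so an alert would be raised for human review.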

Technology has traditionally outpaced the development of legal frameworks that seek to regulate nascent technologies and their applications. However, the pace of development in AI regulation is picking up. Recently proposed EU regulation, for example, bans AI systems that cause or are likely to cause “physical or psychological” harm through the use of “subliminal techniques” or by exploiting vulnerabilities of a specific demographic. It also prohibits public authorities from using AI for ‘social scoring’ and ‘real-time’ remote biometric identification systems, including facial recognition.

Becoming a mature AI & ML organisation

Bringing the elements of our scaled AI organisation together, we see that business value is created by understanding the customer and organisational needs and creating solutions. These solutions are built from and build on the data flowing through the organisation, and are maintained in their correct function through human and technological controls and processes.

According to Gartner’s AI Maturity Model, companies fall into five categories of maturity when it comes to their use of AI technology. Level 1 corresponds to “AI Awareness”, which we won’t include here. For the other levels, our experience of organisations suggests they have the following sorts of capabilities established:

Level 2 - “Active”

Organisations that have recently started their AI journey typically have reasonable data systems, but remain undeveloped in AI & ML systems. For instance, a common pattern would be the presence of the following capabilities:

- Single Customer View - established within a data warehouse pattern

- Privacy Controls - established

- Model Development - data scientists using their own preferred tooling

- Model Deployment - ad hoc per use case

- Activation - through a limited number of channels or through specific tooling

Level 3 - “Operational”

Once organisations begin to industrialise their approaches to ML and AI, they bed down more systematised and standard approaches to model building and deployment. For instance, a common pattern would be the established capabilities of Level 2 along with:

- Data Quality - automated monitoring of quality of data from key source systems

- Model Development - data scientists using standardised, supported tooling

- Model Deployment - data & ML engineers re-implementing data science models for production

- Activation - a variety of established patterns of activation (e.g. email, mobile, web, SMS)

- AI Ethics - a statement of AI Ethics principles for the organisation

- AI Solution Creation - an established team with capabilities for integrating ML and AI components into operational systems.

Levels 4 and 5 - “Systemic” and “Transformational”

Organisations at these levels have all capabilities in place and increase their effectiveness over time. Particular features that distinguish these organisations are:

- Customer Research - the integration of behavioural and UX teams with AI teams

- Monitoring - systematic evaluation of the performance of models and reporting to nominated responsible entities

- Experimentation - champion-challenger systems for models in production and automated experimentation systems

- Feature Production - specific feature stores that enable back-in-time feature production and sharing of feature definitions between teams and models.
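To illustrate what back-in-time feature production means, the sketch below computes a feature as it would have looked at a chosen point in the past, using only events that had already happened by then. This avoids leaking future information into model training. The feature itself (purchases in the previous 30 days), the function name `purchases_last_30_days` and the event layout are assumptions made for the example; a real feature store would add storage, versioning and sharing on top of this idea.

```python
from datetime import datetime, timedelta


def purchases_last_30_days(events, customer_id, as_of):
    """Count a customer's purchases in the 30 days before as_of.

    events: iterable of (customer_id, timestamp) purchase events.
    Only events in the window [as_of - 30 days, as_of) are counted,
    so anything that happened at or after as_of is ignored.
    """
    window_start = as_of - timedelta(days=30)
    return sum(
        1
        for cust, ts in events
        if cust == customer_id and window_start <= ts < as_of
    )


# Hypothetical purchase history.
events = [
    ("c1", datetime(2021, 1, 1)),
    ("c1", datetime(2021, 1, 20)),
    ("c1", datetime(2021, 2, 15)),
    ("c2", datetime(2021, 1, 10)),
]

# The same feature definition evaluated at two different points in time.
print(purchases_last_30_days(events, "c1", datetime(2021, 1, 25)))
print(purchases_last_30_days(events, "c1", datetime(2021, 2, 1)))
```

Because the definition takes the `as_of` time as a parameter, the same code can serve both historical training sets and live production scoring, which is what allows definitions to be shared between teams and models.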

Conclusion

Successfully and safely scaling AI and ML throughout an organisation requires many different elements. Each part can be lightweight, but no part can be forgotten. Accurately appraising your AI maturity and understanding what capabilities you need to develop is an important step in scaling your use of AI.

Do you need practical help in building your AI maturity? If so, Ambiata provides services to help organisations build the capabilities to move up the AI maturity curve. Please feel free to contact us at info@ambiata.com or via the web form on our main page.